What can global education learn from global health? That was the topic of a recent article on the Center for Education Innovations website (the content of which, I later discovered, was largely drawn from a roundtable in New York back in September with the same title). “I disagree with approximately all of this article,” I tweeted snarkily after reading it. But Lee Crawfurd told me I couldn’t throw shade without explaining myself, so here goes.

First, the notion that “the sheer scale of the problem” somehow sets global education apart from global health (or indeed other sectors) strikes me as a strange starting point in thinking about what we can learn. For one thing, I’m not sure it’s possible to place completely different societal problems on some common scale of difficulty. But supposing we do agree to treat development as a morbid game of Top Trumps: what are the categories that make education a winner? That “57 million children around the world do not go to school” is indeed a tragedy; that in low-income countries 1 in 14 children still die before they are even old enough to go to school is too.

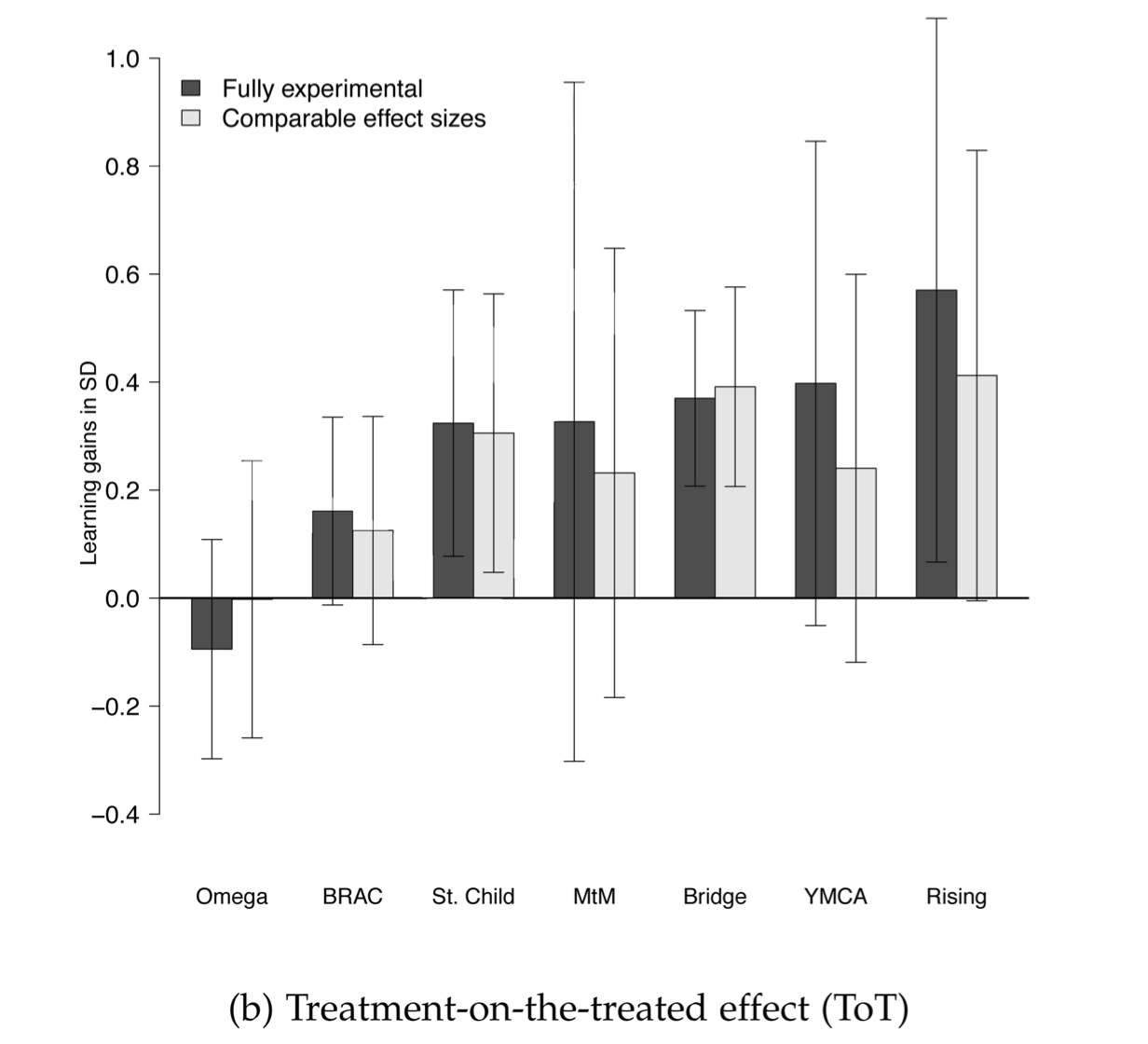

Second, the argument that learning is harder to measure than health outcomes, or can only be measured over decades, baffles me. We have some immensely reliable measures of children’s learning. They are called standardized tests. And before you say it: no, I don’t think that everything that’s important about education can be captured in a standardized test. I do think that some important things can be captured in a standardized test, particularly where they are low stakes for the student and particularly where baseline levels of learning are extremely low, as they are in the countries worst affected by the global learning crisis. Nor is it impossible to see important changes in learning outcomes occur in relatively short periods of time. Take Partnership Schools for Liberia: whatever your views on the programme, it’s clear from the midline report of the RCT that it achieved significant gains in learning outcomes in a single academic year. Indeed, if you’re nerdy enough to read the fine print of the midline report, you’ll see that the evaluators found some learning gains could already be detected in the early weeks of the programme.* As Lant Pritchett has argued, development is a complex modernization process, some features of which – like good governance and pluralism and human rights – really are hard to measure precisely. Education is not one of them.

While we’re on assessment, let’s dispense with two of the other arguments offered here. First, the idea that assessment methods in well-developed education systems inhibit the development of higher-order thinking skills is debatable, though I’m happy to concede there’s a discussion to be had there. But when it comes to the countries bearing the brunt of the global learning crisis, I’ve seen no evidence to suggest that their assessment systems – as problematic as they may be in some instances – are really the binding constraint to addressing the problem of shockingly low learning levels. If you don’t believe me, read Tessa Bold and colleagues’ brilliant study of teacher quality and behaviour across seven countries covering 40% of Africa’s school-age population and ask whether any of those findings would be ameliorated by a different assessment system. Second, can we please stop saying that the problem is “we’re teaching kids what was useful 100 years ago”? Too often the problem is that we’re not teaching kids what was useful 100 years ago, which would still be useful to them today, and for that matter will still be useful in 25 years, even when we’re all out of a job and our snarky blogposts are being automatically generated by backflipping AI robots.

Third, I’m sceptical that the issue is a lack of awareness about “best practices” on the part of policy-makers or local implementers. I come back to Tessa Bold’s work: the puzzle is not that policy-makers are doing the ‘wrong’ things so much as that they are doing the ‘right’ things and finding that they don’t work:

"Over the last 15 years, more than 200 randomized controlled trials have been conducted in the area of education. However, the literature has yet to converge to a consensus among researchers about the most effective ways to increase the quality of primary education, as recent systematic reviews demonstrate. In particular, our findings help in understanding both the effect sizes of interventions shown to raise students’ test scores, and the reasons why some well-intentioned policy experiments have not significantly impacted learning outcomes. At the core is the interdependence between teacher effort, ability, and skills in generating high quality education."

Fourth, I don’t disagree that there are stark differences in the ‘ecosystems’ around global education and global health, though perhaps these differences – as the UK government’s Multilateral Aid Review ratings seem to suggest – are more about quality than quantity. But the word ‘ecosystem’ suggests a degree of harmony and coherence that masks very real strategic tensions and debates within global health – in particular, between the ‘vertical’ funders like GAVI and the Global Fund and more traditional actors focusing on ‘horizontal’ system-strengthening work. To crudely caricature a big and (very) long-running debate, the narrow focus of the vertical funders and their prioritisation of a few specific capabilities over long-term institution building seem to have reduced the variability of their programming, allowing them to more consistently deliver on their objectives. Critics counter that this has come at a big cost: in the long term, making countries dependent on constant injections of outside cash and capability rather than building permanent, high-performing institutions that can raise health outcomes for all; in the short term, neglecting diseases that fall outside their focus area, even if they have a dramatic impact on public health. To take an extreme example, there is no Global Fund for Preventing Road Traffic Accidents, even though in many countries they now kill more people than malaria.

What’s interesting is that the education ecosystem has put its eggs fully in the “systems-strengthening” basket. The model of the Global Partnership for Education, for example, is essentially that developing countries develop an approved sector plan and GPE funds it, in line with development effectiveness principles like ‘country ownership’. Of course, international donors also do their own programming, some of it working more directly through government systems and some of it less. But none of this is on anything like the scale of the global health verticals. Can you imagine, for instance, a Global Fund for Early Years Education that took the same mass-scale, vertically integrated approach to fixing the problem of poor-quality or non-existent early years provision that GAVI has for vaccines? In other words, differences in ecosystems are also differences about strategy, and the question is whether some of the strategies pursued by the global health community are actually available to the global education community.

Finally, there’s the money question. Is under-investment in education a barrier? 100% yes. Is it the barrier? The evidence says probably not: as the World Bank’s World Development Report (WDR) notes, "the relationship between spending and learning outcomes is often weak."

More to the point, no one in this debate ever seems to ask why the money isn't flowing in global education the way it has in global health. To invest in raising the quality of education, whether as a domestic policy-maker or an international donor, you presumably have to believe three things: that raising the quality of education is important; that there is a reasonable chance your investment will yield the promised benefits; and that the benefits of the investment outweigh its costs (financial, political or otherwise).

To scan the official reports and Twitter feeds of some of the biggest, most influential players in the education ‘ecosystem’, you’d think that the first of these three was all that mattered. They are littered with factoids extolling the benefits of education: for the earnings of the educated, for GDP, for health, for women’s reproductive rights, for the environment, and so on. The implication of this messaging is that policy-makers and donors don’t yet understand the returns to education. Really? An alternative analysis would be that policy-makers do understand the returns to education, but believe (rightly or wrongly) that the true, risk-adjusted returns are much lower. Perhaps they aren’t confident that any of the policies or programmes at their disposal will actually yield the results they want given the messy reality of implementation. Given the litany of seemingly promising policy interventions found not to work, or found to work but not to scale, that would not be an altogether unreasonable conclusion. Or perhaps they eschew reforms that genuinely would make a difference because they carry an unacceptable political cost. Either way, messages that don’t address these types of concerns are likely to fall on deaf ears.

Which brings me to the ‘p’ word. What’s missing from this article is a proper discussion of politics. As the team at the Research on Improving Systems of Education (RISE) programme have long argued, and the recent WDR has amplified, the underlying cause of the global learning crisis is that the way education systems are organized, funded and incentivized is not necessarily designed to lead to sustained improvements in the quality of learning (as opposed to, say, the quantity of schooling) – and it’s often politics that keeps it that way.

To me, the most interesting question for global education folk to explore with their colleagues in global health is therefore the politics of reform: the reform coalitions and institutional strategies that have enabled such impressive progress in some areas; the reasons why these strategies proved successful in overcoming particular institutional challenges or binding in vested interests or circumventing potential veto players; but also the limits of these strategies to deliver change in other areas where a different political calculus held.

I’m all in favour of seeing what can be learned from other sectors, but as a wise man once said: if you miss the politics, you miss the point.

* See Romero, Sandefur and Sandholz (2017), p 24: "Students in treatment schools score higher at baseline than those in control schools by .076σ in math (p-value=.077) and .091σ in English (p-value=.049). There is some evidence that this imbalance is not simply due to “chance bias” in randomization, but rather a treatment effect that materialized in the weeks between the beginning of the school year and the baseline survey."